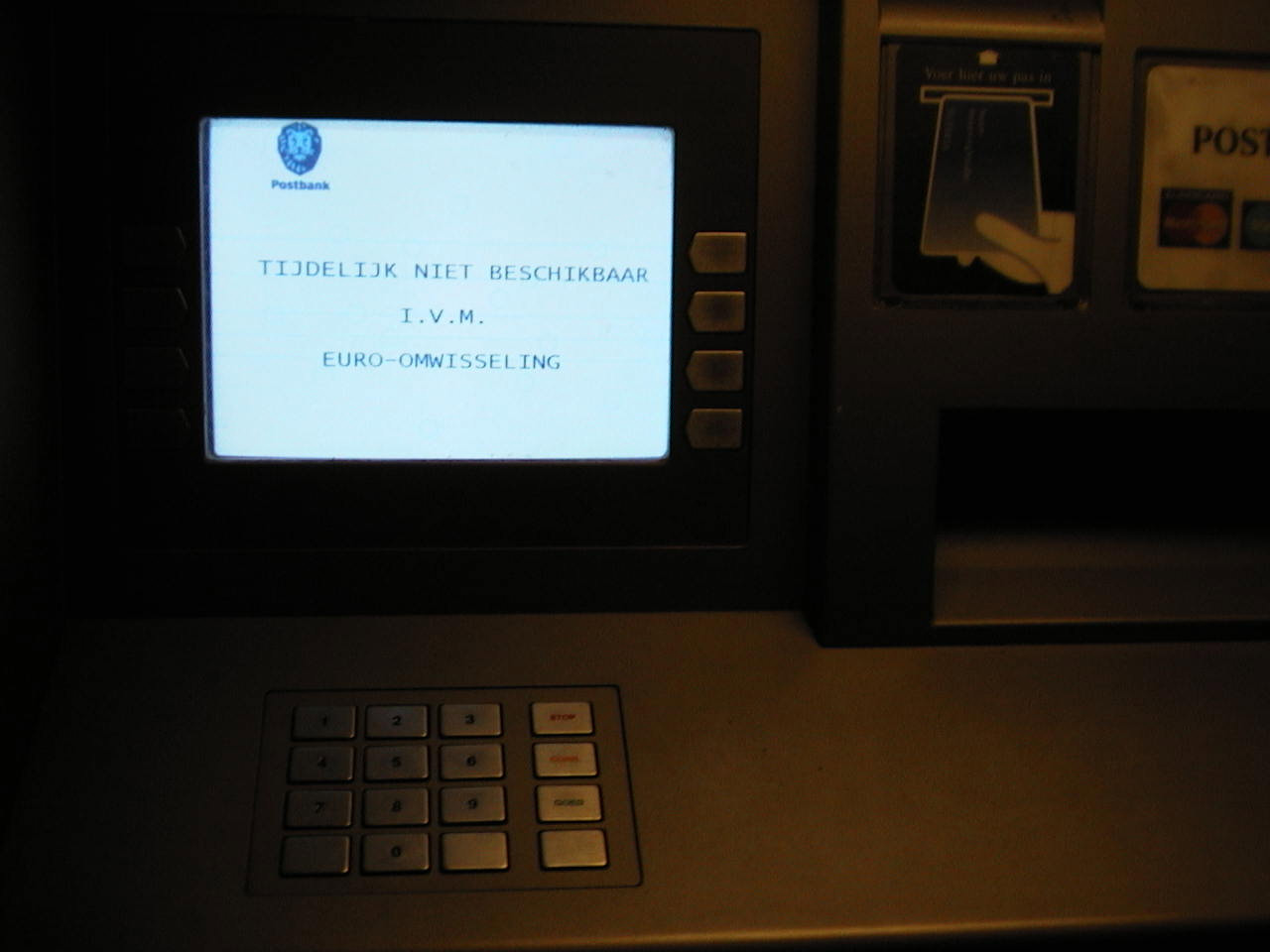

Image link - posted 2002-01-20

It seems more and more products that try to optimize BGP are reaching the market. One is the Radware Peerdirector. There is a whitepaper (PDF) about how the Peerdirector works on the site. This product monitors bandwidth use and reconfigures routers to change prefix advertisements to different peers to optimize how the available bandwidth is used.

Of course, such a product doesn't do anything a network manager can't do manually. However, periodically changing information in BGP introduces instabilities into the global routing system, which is undesirable at a minimum, and may even pose real harm under certain conditions.

Permalink - posted 2002-03-30

On February 12th, CERT published "CERT® Advisory CA-2002-03 Multiple Vulnerabilities in Many Implementations of the Simple Network Management Protocol (SNMP)".

Details haven't been published yet, but it seems it is possible to do all kinds of bad things by firing off non-spec SNMPv1 packets to boxes from many vendors.

Cisco has a security advisory about the problem. Cisco has a bad track record where SNMP security is concerned: in older IOS versions there were "hidden" SNMP communities that enabled pretty much anyone to manage the router. It seems this problem has resurfaced in another form: when you create a trap community, this automatically enables processing of incoming SNMP messages for this community, even though this community doesn't provide read or write access. However, this is enough to open the router to denial of service attacks. It is possible to apply an access list to the trap community, but this depends on the order in which the configuration is processed, so it will not survive a reboot.

The only way to be completely secure is to turn off SNMPv1 or filter incoming SNMP packets on the interfaces rather than at the time of SNMP processing. (Remember, this is UDP so the source addresses are easily spoofed.) Upgrading your IOS software image will also do the trick, as soon as fixed images are available. Consult a certified Cisco IOS version specialist to help you find the right one (more than half of the advisory consists of a list of IOS versions).

Permalink - posted 2002-03-30

The second half of February saw two main topics on the NANOG list: DS3 performance and satellite latency. The long round trip times for satellite connections wreak havoc on TCP performance. In order to be able to utilize the available bandwidth, TCP needs to keep sending data without waiting for an acknowledgment for at least a full round trip time. Or in other words: TCP performance is limited to the window size divided by the round trip time. The TCP window (the amount of data TCP will send before stopping and waiting for an acknowledgment) is limited by two factors: the send buffer on the sending system and the 16-bit window size field in the TCP header. So on a 600 ms RTT satellite link the maximum TCP performance is limited to 107 kilobytes per second (850 kbps) by the size of the header field, and if a sender uses a 16 kilobyte buffer (a fairly common size) this drops to as little as 27 kilobytes per second (215 kbps). Because of the TCP slow start mechanism, it takes several seconds to reach this speed as well. Fortunately, RFC 1323, TCP Extensions for High Performance, introduces a "window scale" option to increase the TCP window to a maximum of 1 GB, if both ends of the connection allocate enough buffer space.
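The arithmetic behind these figures can be sketched in a few lines of Python. This is only an illustration: the function name is made up for the example, and it assumes the sender can have at most one full window in flight per round trip and that a kilobyte is 1024 bytes.

```python
def max_tcp_throughput(window_bytes: int, rtt_seconds: float) -> float:
    """Upper bound on TCP throughput in bytes per second.

    Assumes at most one full window can be in flight per round trip.
    """
    return window_bytes / rtt_seconds


RTT = 0.6  # 600 ms satellite round trip time, as in the text

# The two window limits mentioned in the text.
WINDOWS = {
    "16-bit window field": 65535,
    "16 KB send buffer": 16 * 1024,
}

for label, window in WINDOWS.items():
    rate = max_tcp_throughput(window, RTT)
    print(f"{label}: {rate / 1024:.0f} KB/s ({rate * 8 / 1024:.0f} kbit/s)")
```

The results land within a few kbit/s of the numbers quoted above; the small differences come down to rounding and to whether a kilobit is counted as 1000 or 1024 bits.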

The other subject that received a lot of attention, the maximum usable bandwidth of a DS3/T3 line, is also related to TCP performance. When the line gets close to being fully utilized, short data bursts (which are very common in IP) will fill up the send queue. When the queue is full, additional incoming packets are discarded. This is called a "tail drop". If the TCP session which loses a packet doesn't support "fast retransmit", or if several packets from the same session are dropped, this TCP session will go into "slow start" and slow down a lot. This often happens to several TCP sessions at the same time, so those now all perform slow start at the same time. So they all reach the point where the line can't handle the traffic load at the same time, and another small burst will trigger another round of tail drops.

A possible solution is to use Random Early Detect (RED) queuing rather than First In, First Out (FIFO). RED will start dropping more and more packets as the queue fills up, to trigger TCP congestion avoidance and slow down the TCP sessions more gently. But this only works if there aren't (m)any tail drops, which is unlikely if there is only limited buffer space. Unfortunately, Cisco uses a default queue size of 40 packets. Queuing theory tells us this queue will be filled entirely (on average) at 97% line utilization. So at 97%, even a one packet burst will result in a tail drop. The solution is to increase the queue size, in addition to enabling RED. On a Cisco:

    interface ATM0
     random-detect
     hold-queue 500 out

This gives RED the opportunity to start dropping individual packets long before the queue fills up entirely and tail drops occur. The price is a somewhat longer queuing delay. At 99% utilization, there will be an average of 98 packets in the queue, but at 45 Mbps this will only introduce a delay of 9 ms.
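As a rough check on these numbers, here is a small Python sketch. It assumes the simplest textbook model, an M/M/1 queue, and an average packet size of 500 bytes. Neither assumption is stated in the text, which only refers to "queuing theory", so treat the output as ballpark figures.

```python
def mm1_queue_length(utilization: float) -> float:
    """Average number of packets waiting in an M/M/1 queue.

    Counts only packets waiting, not the one being transmitted.
    The M/M/1 model itself is an assumption made for this sketch.
    """
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be in the range [0, 1)")
    return utilization ** 2 / (1 - utilization)


def queuing_delay_ms(packets: float, packet_bytes: int, link_bps: float) -> float:
    """Time in milliseconds needed to transmit the queued packets."""
    return packets * packet_bytes * 8 / link_bps * 1000


AVERAGE_PACKET_BYTES = 500  # assumed average packet size
LINK_BPS = 45_000_000       # 45 Mbps, as in the text

queued = mm1_queue_length(0.99)
delay = queuing_delay_ms(queued, AVERAGE_PACKET_BYTES, LINK_BPS)
print(f"99% utilization: {queued:.0f} packets queued, {delay:.1f} ms delay")
```

With these assumptions the queue holds about 98 packets at 99% utilization and the delay comes out just under 9 ms. A larger average packet size increases the delay proportionally.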

Permalink - posted 2002-03-31

CAIDA, the Cooperative Association for Internet Data Analysis, has an interesting page with geographical, demographical and many BGP-related statistics per continent and country. As expected, South America, Africa and Asia hardly show up, but there are some surprises. For instance, the US uses more than three times as many addresses as the whole of Europe.

Permalink - posted 2002-03-31

April Fools' Day is coming up again! Don't let it catch you by surprise. Over the years, a number of RFCs have been published on April first, such as...

Read the article - posted 2002-04-01

In March, the NANOG list focussed some of its attention on efforts of the telephony world to come up with "best practices" for packet networks and the internet (Network Reliability and Interoperability Council publications). In meeting minutes, 100% of the carriers reported that they abide by these best practices, which include firewalling routers and DNS servers.

There were discussions about the merits of filtering/firewalling at OC-192 (10 Gbps) speeds (which, depending on your definition of "firewall", may be impossible to do) and about out-of-band versus in-band management. The former is always used in the telephony world, the latter often in IP. The main problem with out-of-band management is that the management network may be unavailable when the network is in critical need of being "managed". Also, many vendors do not support a clear separation between production and management interfaces.

Permalink - posted 2002-06-28

During the second week of April there was some discussion on reordering of packets on parallel links at Internet Exchanges. Equipment vendors try very hard to make sure this doesn't happen, but this has the risk that balancing traffic over parallel links doesn't work as well as it should. It is generally accepted that reordering leads to inefficiency or even slowdowns in TCP implementations, but it seems unlikely reordering will happen much unless hosts are connected at the speed of the parallel links (i.e., Gigabit Ethernet) or there is significant congestion.

Permalink - posted 2002-06-29

In the first week of May, a message was posted on the NANOG list by someone who had a dispute with one of his ISPs. When it became obvious this dispute wasn't going to be resolved, the ISP wasn't content with just cutting off service: they also contacted the other ISP this network connected to and asked them to stop routing the /22 out of their range that the (ex-)customer was using. The second ISP complied and the customer network was cut off from the internet. (This all happened on a Sunday afternoon, so it is likely there is more to the story than what was posted on the NANOG list.)

The surprising thing was that many people on the list didn't think this was a very unreasonable thing to do. It is generally accepted that a network using an ISP's address space should stop using these addresses when it no longer connects to that ISP, but in the cases I have been involved with there was always a reasonable time to renumber. Obviously depending on such a grace period is a very dangerous thing to do. You have been warned.

Permalink - posted 2002-06-30

In the early 2000s, BIT several times organized an open-air event in Ede where people from the Dutch ISP business got together.

Read the article - posted 2002-08-23

O'Reilly, Sebastopol, CA, 2002. ISBN 0-596-00254-8

Iljitsch van Beijnum

(publisher) (Amazon) (Google preview)

On August 28th, AT&T had an outage in Chicago that affected a large part of their network. It took them two hours to fix this, and after this they released a fairly detailed description of what happened: network statements in the OSPF configuration of their backbone routers had been deleted by accident.

AT&T was praised by some on the NANOG list for their openness, but others were puzzled how a problem like this could have such widespread repercussions. This evolved into a discussion about the merits of interior routing protocols. Alex Yuriev brought up the point that when IGPs fail, they do so in a very bad way; his conclusion was that it's better to run without any. This led to some "static routing is stupid" remarks. However, it is possible to run a large network without an IGP and not rely on static routes. This should work as follows:

That way, you never talk (I)BGP with a router you're not directly connected to, so you don't need loopback routes to find BGP peers. Because of the next-hop-self on every session, you don't need "redistribute connected" either so you've eliminated the need for an IGP. Since the MED is increased at each hop, it functions exactly the same way as the OSPF or IS-IS cost and the shortest path is preferred.
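To illustrate only the last point, that a MED incremented at every hop behaves like an IGP cost, here is a toy Python model. It is not a BGP implementation and says nothing about the actual router configuration. The topology, the router names and the fixed increment of 1 per hop are assumptions made for the example.

```python
from collections import deque


def propagate_med(links: dict, origin: str) -> dict:
    """Return the lowest MED each router ends up with for one prefix.

    links maps a router name to its directly connected neighbors.
    Each router passes its best route on to its neighbors with the
    MED increased by 1, and a router keeps the lowest MED it hears.
    """
    best = {origin: 0}
    queue = deque([origin])
    while queue:
        router = queue.popleft()
        for neighbor in links[router]:
            candidate = best[router] + 1
            if candidate < best.get(neighbor, float("inf")):
                best[neighbor] = candidate
                queue.append(neighbor)
    return best


# Hypothetical topology: a ring A-B-C-D-A with router E hanging off D.
LINKS = {
    "A": ["B", "D"],
    "B": ["A", "C"],
    "C": ["B", "D"],
    "D": ["A", "C", "E"],
    "E": ["D"],
}

print(propagate_med(LINKS, "A"))
```

Every router ends up with a MED equal to its hop count from the originating router, which is the shortest-path behavior described above. Using a per-link increment other than 1 would mimic per-link IGP costs.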

Permalink - posted 2002-10-15

On August 15th, the Recording Industry Association of America (RIAA) filed a complaint against AT&T Broadband, Cable & Wireless USA, Sprint and UUNET, asking for a court order to make those networks block an MP3 web site operated in China. BGP is even mentioned on page 11 of the complaint.

This, and other recent RIAA initiatives such as their plans to hack MP3 swappers' PCs, have made the RIAA very unpopular on the NANOG list. The pros and cons of blocking RIAA and record label web sites were discussed at length.

When the offending web site went offline, the RIAA dropped the lawsuit. But I'm sure the net hasn't seen the last of the RIAA lawyers.

Permalink - posted 2002-10-21

During the Asia Pacific Regional Internet Conference on Operational Technologies in March 2002, Geoff Huston presented An Examination of the Internet's BGP Table Behaviour in 2001. It seems the growth in the routing table slowed down considerably to 8%. The two previous years saw a 55% growth rate.

Yes, it took the news some time to reach BGPexpert. There are some other interesting presentations available on the APRICOT 2002 web site as well.

Permalink - posted 2002-10-25

The Computer Science and Engineering Department of the University of Washington is doing a study on BGP misconfiguration, which resulted in the paper Understanding BGP Misconfiguration (postscript). The conclusion of this paper is that there is a lot of misconfiguration going on (200 to 1200 prefixes are affected each day), and it's not always human error.

Permalink - posted 2002-10-26

Stephen Gill has added Application Note: Securing BGP on Juniper Routers to his list of documents. (The list includes some other documents discussing BGP on Juniper routers, among other things.)

He spends a little too much time on filtering "bogons" (address space that isn't allocated or otherwise unroutable) in my opinion, but there is a lot of good stuff in there. Bogon filtering for input will buy you a 35% or so reduction in abusive traffic if you are under a DoS attack with randomly falsified source addresses. Bogon filtering for outbound traffic will buy you next to nothing since if you already do it for incoming, your servers won't have to reply to requests seeming to come from this address space so all that's left is hosts in your network deciding to send packets to bogon space themselves.

My remarks about bogon filtering have not gone unchallenged. Both Stephen Gill (Steve's site) and Rob Thomas (Rob's site) told me bogon filtering can be very useful to get rid of a significant percentage of abusive packets. Especially filtering on bogon source addresses is useful: this stops incoming packets with obviously falsified source addresses. Rob tells me this can be upwards of 50% of the abusive traffic for some attacks. Filtering on bogon destinations (which doesn't have any performance impact if you just route the bogon ranges to the null interface) gets rid of traffic hosts on your network or customer networks send to non-existent destinations. This traffic is usually scans (port scans or worms) but it can also be replies to incoming packets with bogon sources if those aren't filtered.

My main problem with bogon filtering is that you have to keep on top of new /8s assigned to the Regional Internet Registries (RIPE, ARIN, APNIC) by the IANA and change your filters accordingly. A better way to do this is with unicast RPF. If you use uRPF and run full routing without a default, all packets with source addresses for which there is no route in the routing table will be dropped, so exit bogon sources. Bogon destinations get a "host unreachable" because without a default route the router has no place to send them. However, the generally available version of unicast RPF on Cisco routers breaks asymmetric routing, which is very common in ISP and multihomed networks. Cisco has improved the uRPF feature to get around this, but this improved uRPF isn't widely available across platforms and IOS images.
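The difference between the two uRPF behaviors can be sketched in Python. This is a simplified model, not router code. The `strict` flag, the interface names and the routing table are made up for the example, and the non-strict variant (accept if any route to the source exists) is just one way to tolerate asymmetric routing; it is not confirmed here as what Cisco's improved feature does.

```python
import ipaddress


def lookup(routes: dict, address: str):
    """Longest-prefix match: return the interface for address, or None."""
    addr = ipaddress.ip_address(address)
    matches = [(net, ifc) for net, ifc in routes.items() if addr in net]
    if not matches:
        return None
    return max(matches, key=lambda match: match[0].prefixlen)[1]


def urpf_accepts(routes: dict, source: str, in_interface: str,
                 strict: bool = True) -> bool:
    """Decide whether a packet passes the reverse path check.

    strict: the route back to the source must point out of the
            interface the packet arrived on.
    not strict: any route to the source is good enough.
    """
    out_interface = lookup(routes, source)
    if out_interface is None:
        return False  # no route at all, so bogon sources are dropped
    if strict:
        return out_interface == in_interface
    return True


# Hypothetical routing table without a default route (documentation prefixes).
ROUTES = {
    ipaddress.ip_network("192.0.2.0/24"): "isp-a",
    ipaddress.ip_network("198.51.100.0/24"): "isp-b",
}

print(urpf_accepts(ROUTES, "203.0.113.9", "isp-a"))               # no route to source
print(urpf_accepts(ROUTES, "192.0.2.10", "isp-b"))                # asymmetric path, strict
print(urpf_accepts(ROUTES, "192.0.2.10", "isp-b", strict=False))  # asymmetric path, relaxed
```

The second call shows the problem with asymmetric routing: the packet is legitimate, but it arrives over a different interface than the one the router would use to reach its source, so the strict check drops it.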

See Cisco's uRPF_Enhancement_4.pdf document for more information on the new uRPF capabilities. It seems Cisco doesn't like people linking to their stuff, so this link most likely doesn't work. If you have CCO access, you can probably find the document through "regular channels", and typing the name in a search engine will help you find it elsewhere.

Permalink - posted 2002-10-27

At the end of September, the White House published a National Strategy to Secure Cyberspace. It seems that at the last moment, a lot of text was cut and the 60-odd-page PDF document offered for download was made a draft, with the government actively soliciting comments. One of the prime recommendations in the document is:

| R4-1 | A public-private partnership should refine and accelerate the adoption of improved security for Border Gateway Protocol, Internet Protocol, Domain Name System, and others. |

Some people say the government wants Secure BGP (S-BGP) to be adopted. It is unclear how reliable these claims are. In any event, S-BGP has been a draft for two years, with no sign of becoming an RFC or implementations being in the works.

In 2001 4th quarter interdomain routing news I ranted about the general problems with strong crypto in the routing system. It is widely assumed BGP is insecure because "anybody can inject any information into the global routing table." It is true that the protocol itself doesn't offer protection against abuse, but since BGP has many hooks for implementing policies, it is not a big problem to create filters that only allow announcements from customers or peers that are known to be good. However, the Routing Registries that are supposed to be the source of this information aren't always 100% accurate, and although their security has greatly improved over the last few years, it is not inconceivable that someone could enter false information in a routing database.
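The kind of filter meant here boils down to a per-neighbor list of acceptable prefixes. The following Python sketch shows the logic only. The neighbor names, the registered prefixes and the maximum prefix length of /24 are all assumptions for the example; on a real router this would be a prefix list or policy statement, ideally generated from routing registry data.

```python
import ipaddress

# Hypothetical registry data: the prefixes each neighbor may announce.
REGISTERED = {
    "customer-1": [ipaddress.ip_network("198.51.100.0/24")],
    "customer-2": [ipaddress.ip_network("203.0.113.0/24")],
}


def accept_announcement(neighbor: str, prefix: str, max_length: int = 24) -> bool:
    """Accept a prefix only if it falls inside what the neighbor registered.

    max_length rejects overly specific announcements (assumed policy).
    """
    network = ipaddress.ip_network(prefix)
    if network.prefixlen > max_length:
        return False
    allowed = REGISTERED.get(neighbor, [])
    return any(network.subnet_of(registered) for registered in allowed)


print(accept_announcement("customer-1", "198.51.100.0/24"))  # own prefix
print(accept_announcement("customer-1", "203.0.113.0/24"))   # someone else's prefix
```

The filter is only as good as the data behind it: if the registry entry is wrong or has been tampered with, the bad announcement passes.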

In an effort to make BGP more secure, S-BGP goes way overboard. Not only are BGP announcements supposed to be cryptographically signed (this wouldn't be the worst idea ever, although it remains to be seen whether it is really necessary), routers along the way are also supposed to sign the data. And the source gets to determine who may or may not announce the prefix any further. I see three main problems with this approach:

And even if all of these problems can be solved, it gets much, much harder to get a BGP announcement up and running. This will lead to unreachability while people are getting their certificates straightened out. Also, routers in colo facilities aren't the best place to store private keys.

Permalink - posted 2002-10-28

netVmg, a company selling products that optimize traffic flow for organizations with more than one connection to the net, didn't waste any time and announced a "webinar" on October 9th, promising to tell what happened and how to prevent it. I think they mean that if you use their products, your traffic is automatically rerouted around any black holes or congestion in ISP networks. Another company selling similar products, Route Science, had a spokesperson explain that BGP is pretty much brain dead in an Americas Network article.

What those companies conveniently fail to mention is that what their (expensive) products do automatically can also be done by hand on any BGP router. But I guess as long as they keep the misinformation to a minimum and they don't inject instability into the global routing table by trying to micro-manage inbound traffic flows, their products are harmless and serve a need.

Check out netVmg's newsletter called The Best Path for not-too-technical discussions about the benefits of multihoming with the marketese limited to reasonable levels.

Permalink - posted 2002-10-29

On October 4th, Worldcom/UUNET had a major outage. Worldcom attributes the problems to "a route table issue". It is still unclear what happened, but the rumors indicate a problem similar to the one that AT&T experienced in August: something went wrong while managing the routers. This time it wasn't a configuration problem: the problems started after loading a different Cisco IOS image on a large number of routers.

Permalink - posted 2002-10-30

I'm a bit behind on the news. The most important IDR news is that of the DoS attacks on the root nameservers on October 21st. (There will be more on this in the tech list news soon.) By some strange coincidence, I had just put a page outlining anti-denial-of-service measures up on this site. I've been working on this since before the summer, but I hadn't yet really published the story on this site since I was considering publishing it somewhere else and I have been unable to test how well it works.

Permalink - posted 2002-10-31

Image link - posted 2002-11-15

Image link - posted 2002-12-17